
First-ever successful mind-controlled robotic arm without brain implants

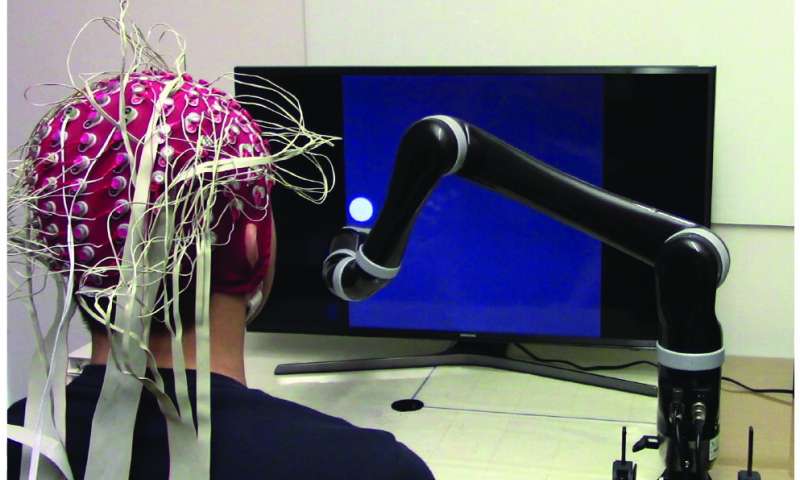

A team of researchers from Carnegie Mellon University, in collaboration with the University of Minnesota, has made a breakthrough in the field of noninvasive robotic device control. Using a noninvasive brain-computer interface (BCI), researchers have developed the first-ever successful mind-controlled robotic arm exhibiting the ability to continuously track and follow a computer cursor.

Being able to noninvasively control robotic devices using only thoughts will have broad applications, in particular benefiting the lives of paralyzed patients and those with movement disorders.

BCIs have been shown to achieve good performance for controlling robotic devices using only the signals sensed from brain implants. When robotic devices can be controlled with high precision, they can be used to complete a variety of daily tasks. Until now, however, BCIs successful in controlling robotic arms have used invasive brain implants. These implants require a substantial amount of medical and surgical expertise to correctly install and operate, not to mention cost and potential risks to subjects, and as such, their use has been limited to just a few clinical cases.

A grand challenge in BCI research is to develop less invasive or even totally noninvasive technology that would allow paralyzed patients to control their environment or robotic limbs using their own “thoughts.” Such noninvasive BCI technology, if successful, would bring this much-needed capability to numerous patients and potentially even to the general population.

However, BCIs that use noninvasive external sensing, rather than brain implants, receive “dirtier” signals, which currently leads to lower resolution and less precise control. Thus, when using only the brain to control a robotic arm, a noninvasive BCI does not yet match the performance of implanted devices. Despite this, BCI researchers have forged ahead, their eye on the prize of a less- or non-invasive technology that could help patients everywhere on a daily basis.

Bin He, Trustee Professor and Department Head of Biomedical Engineering at Carnegie Mellon University, is achieving that goal, one key discovery at a time.

“There have been major advances in mind-controlled robotic devices using brain implants. It’s excellent science,” says He. “But noninvasive is the ultimate goal. Advances in neural decoding and the practical utility of noninvasive robotic arm control will have major implications on the eventual development of noninvasive neurorobotics.”

Using novel sensing and machine learning techniques, He and his lab have been able to access signals deep within the brain, achieving a high resolution of control over a robotic arm. With noninvasive neuroimaging and a novel continuous pursuit paradigm, He is overcoming the noisy EEG signals, significantly improving EEG-based neural decoding and facilitating real-time continuous 2-D robotic device control.

Using a noninvasive BCI to control a robotic arm that tracks a cursor on a computer screen, He has shown for the first time in human subjects that a robotic arm can follow the cursor continuously. Previously, robotic arms controlled noninvasively by humans followed a moving cursor in jerky, discrete motions, as though the arm were trying to “catch up” to the brain’s commands. Now the arm follows the cursor in a smooth, continuous path.

In a paper published in Science Robotics, the team established a new framework that addresses and improves upon the “brain” and “computer” components of BCI by increasing user engagement and training, as well as spatial resolution of noninvasive neural data through EEG source imaging.

The paper, “Noninvasive neuroimaging enhances continuous neural tracking for robotic device control,” shows that the team’s unique approach to solving this problem not only enhanced BCI learning by nearly 60% for traditional center-out tasks but also enhanced continuous tracking of a computer cursor by over 500%.

The technology also has applications that could help a variety of people by offering safe, noninvasive “mind control” of devices, allowing people to interact with and control their environments. To date, the technology has been tested in 68 able-bodied human subjects (up to 10 sessions for each subject), including virtual device control and control of a robotic arm for continuous pursuit. The technology is directly applicable to patients, and the team plans to conduct clinical trials in the near future.

“Despite technical challenges using noninvasive signals, we are fully committed to bringing this safe and economic technology to people who can benefit from it,” says He. “This work represents an important step in noninvasive brain-computer interfaces, a technology which someday may become a pervasive assistive technology aiding everyone, like smartphones.”

Source: https://techxplore.com/news/2019-06-first-ever-successful-mind-controlled-robotic-arm.html